AWS Session Manager: A better way to SSH

Secure Shell (SSH) is a solid remote access tool. IMO it's a key enabling technology for distributed systems. However, its effective security is not ideal. Its authentication scheme with RSA key pairs and wire-level encryption is great. But key management is tricky, and opening the firewall(s) for bidirectional SSH (port 22) increases the attack surface.

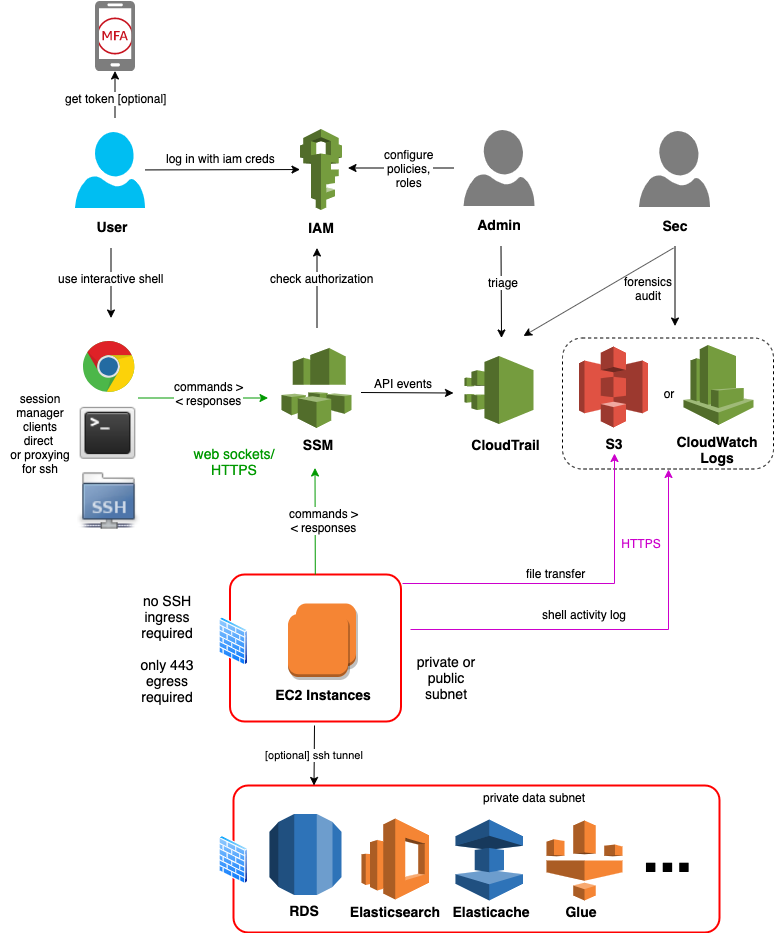

This article describes an AWS innovation called the Session Manager, introduced in 2018 and extended with SSH proxying in 2019. Yes, you really can improve that mouse trap. The Session Manager adds to SSH a layer of authentication and authorization based on IAM and only requires HTTPS outbound on the server end. SSH rides on top of the Session Manager via SSH's proxy capability.

Now, remote host access might be on the wane. Cloud native is trending us towards managed platforms (cloud functions, serverless, container orchestrators), where it is discouraged, if not impossible, to start a shell. But for now, we still need it.

Let us level set first on SSH before we get into SSH proxied via the Session Manager.

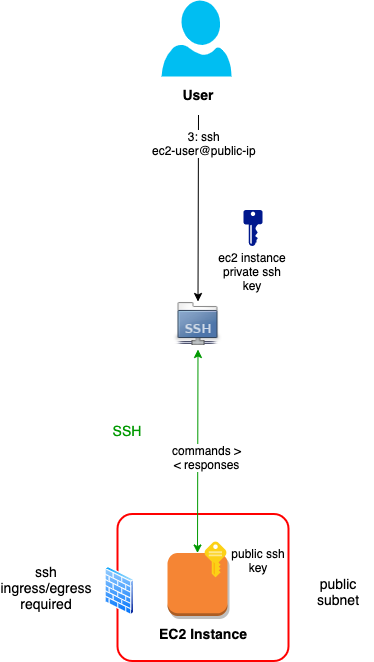

Straight SSH

Straight, plain-vanilla SSH has a client and a server component, communicating over the SSH protocol. A shell session is initiated to a server-side user account, and authenticated either by a password or private key whose public key has been pre-authorized on the server:

Note that the ssh client is usually a bundle of tools: secure shell, file transfer and copy (ssh, sftp and scp). All three use the above architecture.

For reference, starting the session looks something like this:

ssh -i ~/.ssh/my-ec2-instance.pem ec2-user@{public-ip-or-fqdn}

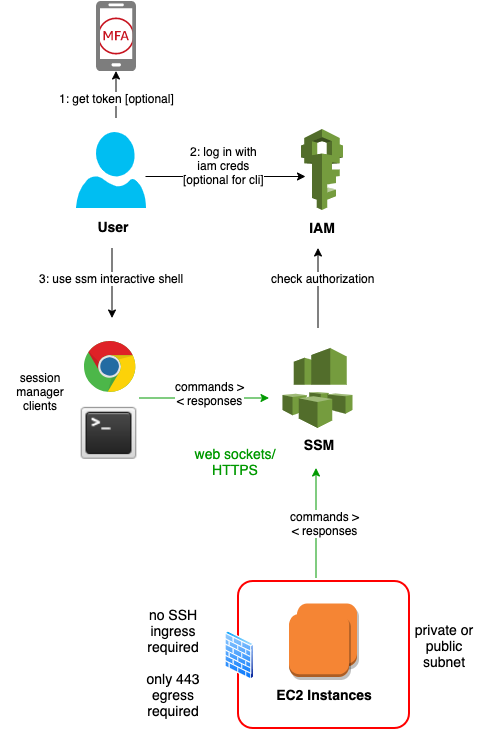

Direct Session Manager

AWS offers session manager clients as part of the AWS CLI (with an add on) and the Console (Browser interface). The client and server communicate over HTTPS and secure web sockets, via the AWS Systems Manager (SSM) gateway:

A few points to reemphasize here:

- There is effectively no risk of inbound attack. The firewall (security group and/or network acl) only needs a 443 outbound rule. The ssm agent on each EC2 instance polls the gateway for session requests.

- You can remove jumphosts altogether, or at least move them to private subnets. These instances need no public IP, and outbound access is enabled via a NAT gateway.

- It's easier to harden authentication and manage credentials at scale. Console password rules (complexity, expiry), CLI access key rules (expiry) and use of MFA can be centralized. With AWS SSO or cross-account role assumption, each user has one set of credentials to use and rotate. Of course on- and off-boarding are simplified as well.

- You can more easily authorize access. SSH keys are often shared across hosts for convenience. IAM policies can be configured to limit access based on instance ids, tags, subnets, etc. on a per-user, per-role or per-group basis.

- You can more easily audit access for forensics or integrate DevSecOps processes. Session lifecycle is logged in CloudTrail, and you can log to S3 the full shell history (both commands typed and responses displayed).
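For reference, starting a direct session from the CLI looks something like the sketch below. It assumes the AWS CLI and its Session Manager plugin are installed on the client. The instance id is a made-up placeholder, and run is a hypothetical dry-run helper that only prints the command unless DRY_RUN=0 is exported.

```shell
# Hypothetical placeholder; substitute a real instance id.
INSTANCE_ID="i-0123456789abcdef0"

# Illustrative dry-run helper (not part of the AWS CLI): prints the
# command, and only executes it when DRY_RUN=0 is exported.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Open an interactive shell on the instance through Session Manager.
run aws ssm start-session --target "$INSTANCE_ID"
```

Note there is no key file and no user name here: the session is authorized by IAM alone.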

Session Manager Prerequisites

Client Device

IAM User

- An IAM policy. I like to use this set:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "ssm:GetConnectionStatus",
        "ssm:DescribeInstanceInformation",
        "ssm:DescribeSessions",
        "ssm:StartSession",
        "ssm:DescribeInstanceProperties"
      ],
      "Resource": "*",
      "Effect": "Allow",
      "Sid": "SessionManagerStartDescribe"
    },
    {
      "Action": [
        "ssm:TerminateSession"
      ],
      "Resource": "arn:aws:ssm:*:*:session/${aws:username}-*",
      "Effect": "Allow",
      "Sid": "SessionManagerTerminate"
    }
  ]
}

Note that the above allows a user to list all current and historical sessions. Last I checked (Nov 2019), you cannot restrict DescribeSessions to those started by the user, but the policy does fortunately restrict session termination to those the user has started.

You have to choose between users being able to view all current sessions (but not enter or terminate them) and viewing none at all. Same for historical sessions.

EC2 Instance

- An SSM agent. It's pre-installed with the Amazon Machine Images (AMI) for Windows Server, Ubuntu Server 16 and 18, Amazon Linux 1 and 2, and all the AL variants for Batch, ECS, ElasticBeanstalk, etc. However, it has taken AWS many months to roll out the latest agent that supports SSH Proxying. Last I checked (Nov 2020), Ubuntu 16 supports direct but not proxied ssh. (Run snap refresh amazon-ssm-agent in your user data or other bootstrap mechanism to update it.)

- IAM policy via an ec2 instance profile (role). The AWS doc on this is a little scattered. I like to use this set:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "SessionManagerCore",
      "Effect": "Allow",
      "Action": [
        "ec2messages:AcknowledgeMessage",
        "ec2messages:DeleteMessage",
        "ec2messages:FailMessage",
        "ec2messages:GetEndpoint",
        "ec2messages:GetMessages",
        "ec2messages:SendReply",
        "ssm:UpdateInstanceInformation",
        "ssm:ListInstanceAssociations",
        "ssmmessages:OpenDataChannel",
        "ssmmessages:CreateDataChannel",
        "ssmmessages:OpenControlChannel",
        "ssmmessages:CreateControlChannel"
      ],
      "Resource": "*"
    }
  ]
}

If you want to log session data (shell history), you'll need another set of permissions, e.g. documented here.

Assign the profile (role) to the ec2 instance. If you assign the role after the instance started, bounce the ssm agent to get it connected to the backend.
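Bouncing the agent and checking that it registered could look something like this. It is a sketch under assumptions: the agent either runs under systemd (as on Amazon Linux 2) or was installed as a snap (as on Ubuntu Server 18). The restart_agent function, its "snap" and "systemd" labels, the run dry-run helper and the instance id are all invented for illustration.

```shell
# Hypothetical placeholder; substitute a real instance id.
INSTANCE_ID="i-0123456789abcdef0"

# Illustrative dry-run helper: prints the command, and only executes
# it when DRY_RUN=0 is exported.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Pick the restart command for the way the agent was installed.
# $1 is "snap" or "systemd" (labels made up for this sketch).
restart_agent() {
  case "$1" in
    snap)    run sudo snap restart amazon-ssm-agent ;;
    systemd) run sudo systemctl restart amazon-ssm-agent ;;
    *)       echo "unknown install type: $1" >&2; return 1 ;;
  esac
}

# On the instance: restart the agent so it picks up the new role.
restart_agent systemd

# From the client: the instance should be listed once the agent has registered.
run aws ssm describe-instance-information \
  --filters "Key=InstanceIds,Values=$INSTANCE_ID"
```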

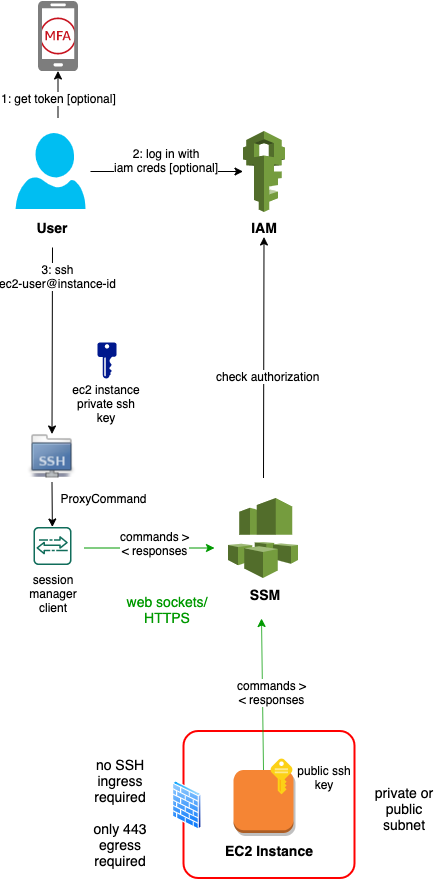

Proxied SSH

Now let's bring in our trusty ssh, scp and sftp.

You can also use this approach for:

- custom users with non-default authorized ssh keys

- custom users with password only authentication

The proxy config for Linux (~/.ssh/config):

# SSH over Session Manager
host i-* mi-*
    ProxyCommand sh -c "aws ssm start-session --target %h --document-name AWS-StartSSHSession --parameters 'portNumber=%p'"

For Windows/OpenSSH (C:\Users\{username}\.ssh\config):

# SSH over Session Manager
host i-* mi-*
    ProxyCommand C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe "aws ssm start-session --target %h --document-name AWS-StartSSHSession --parameters portNumber=%p"

If you have multiple AWS profiles configured, export the AWS_PROFILE variable (in Windows PowerShell, set $env:AWS_PROFILE) prior to starting the session.

So now we can use the instance id, instead of the public ip or fqdn:

ssh -i ~/.ssh/my-ec2-instance.pem ec2-user@{instance-id}

It will look and feel just like a straight ssh session. Occasionally, starting the session takes a few seconds, but the performance from there on is just as good as ssh, in my experience.

All the other facilities, like scp and sftp, and remote execution of a command, are available as well. Also you can use the bundled ssh-add utility to store your keys in a cache and drop the -i option.
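Those facilities might be exercised as in the sketch below. The instance id, profile name and file names are placeholders, and run is a hypothetical dry-run helper that prints each command unless DRY_RUN=0 is exported. It assumes the proxy config above is in place and, for ssh-add, that an ssh-agent is already running.

```shell
# Hypothetical placeholders for illustration.
INSTANCE_ID="i-0123456789abcdef0"
KEY="$HOME/.ssh/my-ec2-instance.pem"

# Illustrative dry-run helper: prints the command, and only executes
# it when DRY_RUN=0 is exported.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Select the AWS profile the proxy command should use (name is made up).
export AWS_PROFILE="my-profile"

# Cache the key in ssh-agent so -i can be dropped from later commands.
run ssh-add "$KEY"

# Remote execution of a single command.
run ssh "ec2-user@$INSTANCE_ID" uptime

# Copy a local file to the instance.
run scp ./app.tar.gz "ec2-user@$INSTANCE_ID:/tmp/"

# Interactive file transfer.
run sftp "ec2-user@$INSTANCE_ID"
```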

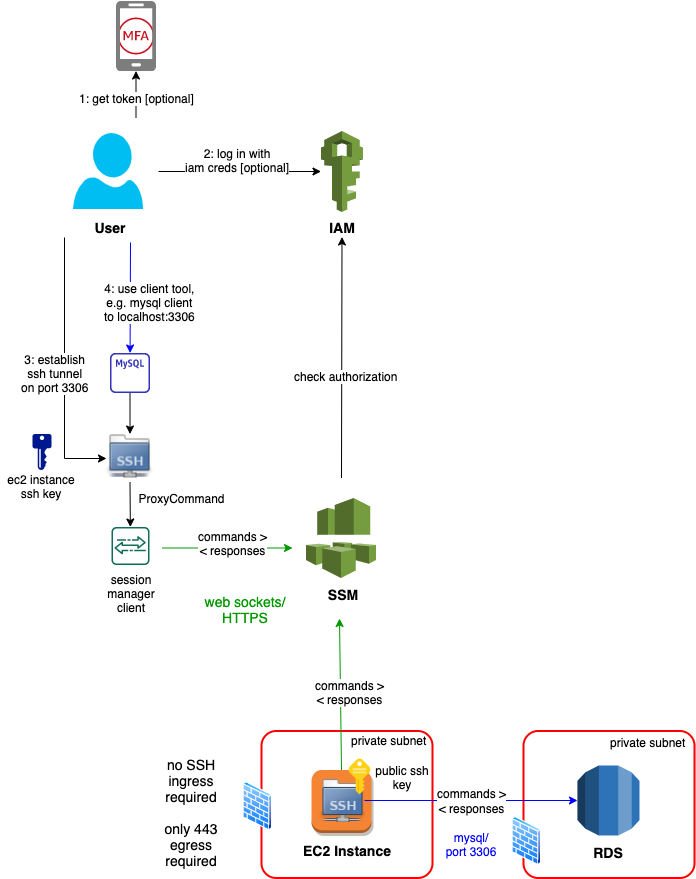

Proxied SSH Tunnel

What about access to data stores, like a private RDS or Elasticsearch/Kibana instance? You can use your client tools, like MySQL Workbench in the case of RDS MySQL, or a browser in the case of Kibana. You connect them to a local port, e.g. 3306 or 8443, that is an open ssh tunnel riding on the session manager.
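A tunnel of this kind could be opened roughly as follows. This is a sketch, not a tested recipe: the RDS endpoint, database user and instance id are made-up placeholders, the instance is assumed to be able to reach the database on port 3306, and run is a hypothetical dry-run helper that prints each command unless DRY_RUN=0 is exported.

```shell
# Hypothetical placeholders for illustration.
INSTANCE_ID="i-0123456789abcdef0"
RDS_ENDPOINT="mydb.example.us-east-1.rds.amazonaws.com"
KEY="$HOME/.ssh/my-ec2-instance.pem"
LOCAL_PORT=3306

# Illustrative dry-run helper: prints the command, and only executes
# it when DRY_RUN=0 is exported.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# Forward the local port to the RDS endpoint by way of the instance.
# -N: no remote command, just the tunnel; -L: local port forwarding.
run ssh -i "$KEY" -N \
  -L "$LOCAL_PORT:$RDS_ENDPOINT:3306" "ec2-user@$INSTANCE_ID"

# In a second terminal: point the MySQL client at the local end of the
# tunnel. 127.0.0.1 forces TCP; "localhost" would use the Unix socket.
run mysql -h 127.0.0.1 -P "$LOCAL_PORT" -u admin -p
```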

The following diagram depicts access from the MySQL CLI client to a MySQL RDS:

Authorization

You can amend the user policy to restrict access. My two favorites follow.

- Whitelist specific people in a common policy:

...

{
  "Sid": "SessionManagerDenyRestricted",
  "Effect": "Deny",
  "Action": "ssm:StartSession",
  "Resource": [
    "arn:aws:ec2:*:*:instance/i-0cdbc0106ed03bba5",
    "arn:aws:ec2:*:*:instance/i-07e8b9fc88ee02bf8"
  ],
  "Condition": {
    "StringNotEquals": {
      "aws:username": [
        "first.last1",
        "first.last2"
      ]
    }
  }
}

Or you could use a group or role reference.

- Whitelist categories of instances in a user- or group-specific policy:

...

{
  "Sid": "StartSessionResourceTag",
  "Effect": "Allow",
  "Action": "ssm:StartSession",
  "Resource": "arn:aws:ec2:*:*:instance/*",
  "Condition": {
    "StringEquals": {
      "ssm:resourceTag/Purpose": "jumpbox"
    }
  }
}

Of course you then have to restrict ec2 instance tag updates to admins.

Refer to these quick start policies for more.
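To make the logic of the Deny statement in the first policy concrete, here is a toy simulation in shell. It is not a call to IAM, and the deny_applies function is made up for this sketch. It assumes the names are given as a list of values under a single aws:username key, where IAM treats a negated operator such as StringNotEquals as "equals none of the values".

```shell
# Toy model of StringNotEquals with several values: the Deny statement
# applies only when the user name equals none of the listed names.
# Usage: deny_applies USER NAME...
deny_applies() {
  user="$1"
  shift
  for name in "$@"; do
    if [ "$user" = "$name" ]; then
      return 1   # listed user: the Deny does not apply
    fi
  done
  return 0       # unlisted user: StartSession is denied
}

for u in first.last1 first.last2 someone.else; do
  if deny_applies "$u" first.last1 first.last2; then
    echo "$u: denied on the restricted instances"
  else
    echo "$u: not denied by this statement"
  fi
done
```

Only someone.else is reported as denied. The two listed users still need an Allow from another statement, since escaping a Deny grants nothing by itself.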

Audit

By default, all session starts and ends are logged to CloudTrail, like all AWS service activity. You could enable some DevSecOps process on this using CloudWatch event rules, like looking for .
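For a quick manual look at those CloudTrail records, something like the following may do. It is a sketch: it assumes the caller is allowed to read CloudTrail events, and run is a hypothetical dry-run helper that prints the command unless DRY_RUN=0 is exported.

```shell
# Illustrative dry-run helper: prints the command, and only executes
# it when DRY_RUN=0 is exported.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else printf '%s\n' "$*"; fi
}

# List the most recent StartSession events recorded by CloudTrail.
run aws cloudtrail lookup-events \
  --lookup-attributes AttributeKey=EventName,AttributeValue=StartSession \
  --max-results 10
```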

You can enable session data logging to an S3 bucket, but take care! The content is highly sensitive. For example, users may type passwords in shell command arguments and cat files with PHI data. Therefore the logs must be sent to a well-guarded bucket, e.g. in an audit account to which forensic investigators are only granted temporary access.

Conclusion

Putting it all together:

AWS Session Manager enables us to:

- harden our systems (reduce the attack surface, use multi-factor authentication and fine-grained authorization)

- centralize identity and access management

- centralize audit

And all this with no degradation in the user experience.

IMO, it's a win-win for dev, ops and security teams.

Published Dec 10, 2020

Comments? email me - mark at sawers dot com