Autonomy in the Cloud

How do we take advantage of cloud benefits, like cost, agility and resilience, but avoid the primary tradeoff — vendor lock-in? I present a framework to select self- versus cloud-managed services.

Introduction

Using a cloud provider's managed services and Platform-as-a-Service (PaaS) frameworks can accelerate your business, but you risk losing autonomy and, in limited cases, incurring higher costs. In this article I discuss my strategy for balancing these forces. It's not quite a full decision framework, but it kickstarts an evaluation. Perhaps you can use it as a starting point for your own methodology.

Mind Shift

Technology selection criteria in the cloud are very different from those in a traditional data center. Perhaps because of vastly increased transparency and control, cost, in all its forms, is front and center. (Also, we may no longer have separate hardware and networking teams, so solution- or platform-focused architects are taking up these decisions.)

The cost profile is radically different. In many cases I see cost surpassing features in significance in the cloud context. We will trade a narrower feature set for a much cheaper, and different, cost profile. For basic Infrastructure-as-a-Service (IaaS) like compute and storage, we trade CapEx (long-term fixed) for OpEx (short-term variable) expenditures, gaining massive flexibility. For example, we can make sizing decisions for this month's load, instead of next year's. Managed services, for example a database, can work the same way: pay as you go (per transaction, hourly or monthly billing) instead of a one-time or annual fee to a vendor.
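To make the sizing point concrete, here is a minimal sketch comparing capacity sized once for the peak month against capacity resized every month. The load figures and the flat price per host-month are made-up assumptions for illustration, and the sketch ignores the fact that an owned host and a rented host rarely cost the same.

```python
# Hypothetical monthly load, in "hosts needed", over one year.
monthly_hosts_needed = [2, 2, 3, 3, 4, 4, 5, 6, 6, 8, 10, 12]

HOST_COST_PER_MONTH = 100  # assumed flat price per host-month, in dollars


def fixed_capacity_cost(load, unit_cost):
    """Size once for the peak month and pay for that capacity all year."""
    return max(load) * unit_cost * len(load)


def pay_as_you_go_cost(load, unit_cost):
    """Resize every month and pay only for the hosts that month needs."""
    return sum(load) * unit_cost


fixed = fixed_capacity_cost(monthly_hosts_needed, HOST_COST_PER_MONTH)
variable = pay_as_you_go_cost(monthly_hosts_needed, HOST_COST_PER_MONTH)
print(f"sized for peak: ${fixed}, sized monthly: ${variable}")
```

The gap between the two totals is the cost of capacity that sits idle while waiting for the peak.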

Infrastructure

I'll assume that you buy into using cloud for core infrastructure: compute, storage and networking. This is all undifferentiated heavy lifting — plumbing — that we want to outsource.

I also go even further, to higher-level networking stuff (IDS/IPS, WAFs, load balancers, DNS and so forth), but I may be biased, since I don't personally have experience selecting and operating that layer.

On storage, you don't have much of a choice with root volumes and ephemeral drives, but you do with network-mounted shared storage and object stores. I don't believe providers enable customers to set up their own SAN or NAS in the cloud. Instead, you could:

- Set up a virtual machine (an EC2 instance on AWS) to act as a central file server and NFS mount its drives.

- Use EFS on AWS, a relatively performant but fairly expensive option that supports a standard NFS interface (i.e. you can mount it as a file system).

- Use the much cheaper Simple Storage Service (S3) and its proprietary interface. It also has lifecycle management tools to trade reliability and accessibility for lower cost, CDN integration (CloudFront), native HTTP access (but with primitive security), and IAM integration down to the object level.

- At WebomateS, we're exploring a hybrid, S3FS-FUSE, a package that can mount S3 buckets as a proper file system on an EC2 host. Please email me (mark at sawers dot com) if you have experience with it. I like this option because it abstracts the storage implementation, providing portability across clouds.

- Move it all into your data center. Dropbox migrated off S3 some time back. Netflix hosts its own consumer endpoint content streaming. Of course, those capabilities are core to their business, and at their scale it makes sense to go that way.
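The portability argument for a mounted file system can also be made in application code: if the application only talks to a small storage interface, the backend can change without touching callers. Below is a minimal sketch of that idea. `BlobStore`, `DirectoryStore`, `MemoryStore` and `save_report` are hypothetical names invented for this example, and the in-memory class merely stands in for an object store client.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class BlobStore(Protocol):
    """Hypothetical minimal interface the application codes against."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class DirectoryStore:
    """Backs the interface with a directory, e.g. an NFS, EFS or FUSE mount point."""

    def __init__(self, root: Path) -> None:
        self.root = root

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class MemoryStore:
    """In-memory stand-in for an object store client; handy for tests."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def save_report(store: BlobStore, name: str, body: str) -> None:
    """Application code depends only on BlobStore, not on a backend."""
    store.put(f"reports/{name}.txt", body.encode("utf-8"))


# The same application call works against either backend.
with tempfile.TemporaryDirectory() as tmp:
    for store in (DirectoryStore(Path(tmp)), MemoryStore()):
        save_report(store, "q1", "all green")
        assert store.get("reports/q1.txt") == b"all green"
```

A mounted bucket gives you this kind of indirection at the OS level; an interface like the one above gives it at the code level, at the price of writing one adapter per backend.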

If you haven't guessed it yet, my strategy is to operate as few infrastructure and platform services as possible. Intercom calls this approach 'Run Less Software' (see the SE Daily podcast with Rich Archbold).

So let's look at higher-level layers like distributed data stores (databases and caches), message brokers, systems (cluster and container orchestration), and so on.

Services

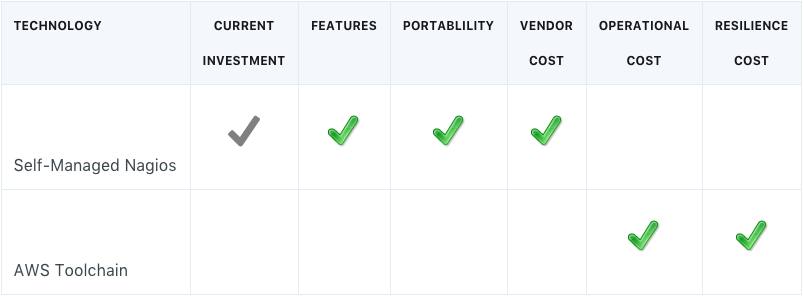

Generally, we are considering two options here: managing your own open service on the cloud IaaS, be it home-grown, Free and Open-Source Software (FOSS) or commercial, versus a cloud-provider managed service. Management activities are hosting, securing, scaling, failing over, patching, backing up and other operating activities, at both OS and service layers. I consider six criteria or dimensions, and line these up in a matrix, marking each cell to sum into a rough winner.

The criteria are:

- Current Investment: Is there an investment in the current service, such as lots of code or scripts, or years of team development and operating knowledge?

- Features: Which has significantly better features?

- Portability: Could we lift-and-shift it to another cloud provider? If not, have we or could we create an inexpensive abstraction layer for a cloud-proprietary service and swap it for another with a configuration change?

- Vendor Cost: Which is significantly cheaper: the cloud provider, a traditional COTS vendor or FOSS?

- Operational Cost: Which has significantly lower overhead to maintain, back up, patch, etc.?

- Resilience Cost: Which takes significantly less effort to make highly available and resilient to both failure and elastic demand?

Examples

Now I'll run through some examples, referencing the decisions I've helped make for WebomateS' AWS cloud.

Matrix Notation

Below I use a table to capture the evaluation of two options and their significant differences. Here's what I mean by the markings:

- A gray check is a slight advantage

- A green check is a clear advantage

- No check in the column means no significant difference between the options

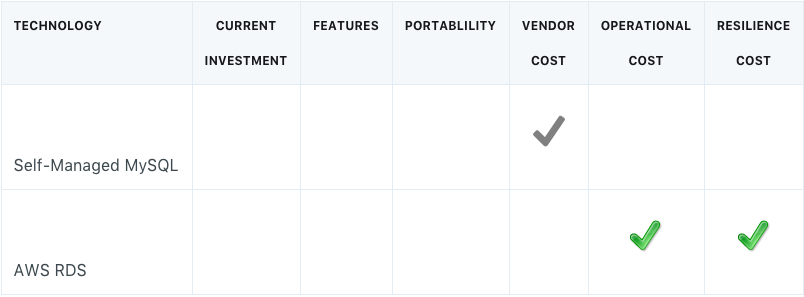
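For readers who prefer code to tables, here is a minimal sketch of how such a matrix could be tallied. The numeric weights (1 for a slight advantage, 2 for a clear one) are my assumption, since the marks are only described as being summed, and the example marks are illustrative rather than taken from any table in this article.

```python
from collections import Counter

CRITERIA = (
    "Current Investment",
    "Features",
    "Portability",
    "Vendor Cost",
    "Operational Cost",
    "Resilience Cost",
)

# Assumed weights: a gray check counts 1, a green check counts 2.
SLIGHT, CLEAR = 1, 2


def rough_winner(marks):
    """Sum the marks per option and return (winner, totals).

    `marks` maps a criterion to (option, weight); criteria with no
    significant difference are simply left out. The winner is None
    when there are no marks or the top totals tie.
    """
    totals = Counter()
    for criterion, (option, weight) in marks.items():
        if criterion not in CRITERIA:
            raise ValueError(f"unknown criterion: {criterion}")
        totals[option] += weight
    ranked = totals.most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None, dict(totals)
    return (ranked[0][0] if ranked else None), dict(totals)


# Illustrative marks only, not an actual evaluation.
example = {
    "Features": ("self-managed", SLIGHT),
    "Operational Cost": ("cloud-managed", CLEAR),
    "Resilience Cost": ("cloud-managed", CLEAR),
}
winner, totals = rough_winner(example)
print(winner, totals)
```

The sum is only a rough guide. A single criterion, such as a heavy current investment, can reasonably override the total.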

Relational Database

Here's the easiest decision possible: a cloud-managed FOSS or inexpensive relational database.

You can easily run the db yourself in another cloud, but most likely they have a managed offering as well. It's a small monthly cost to pay for a huge jump in availability (e.g. master failover to another Availability Zone) and scale (read replicas). And the deployment, upgrades, log rotation, backups and patching are all taken care of for you.

It's a no-brainer.

Likewise for a SQL data warehouse like AWS' Redshift. All of your client interaction should be standard SQL.

Same goes for a FOSS cache service, like Memcached or Redis. Go with the AWS managed service, ElastiCache. Your client interaction uses the standard APIs.

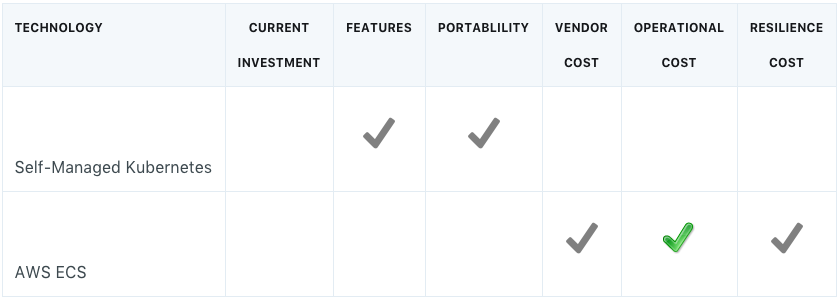

Container Orchestration

Yes, Kubernetes is da bomb and I eagerly await AWS' Elastic Kubernetes Service (EKS) General Availability (GA), but until then we'll run on AWS Elastic Container Service (ECS). This is plumbing, and running this would distract the team from generating core value for customers.

ECS wins on cost, since to be safe we need three extra hosts to run the Kubernetes masters. Then there's the upkeep of those masters plus the node agents. ECS wins slightly on resilience, as it is multi-AZ right out of the box.

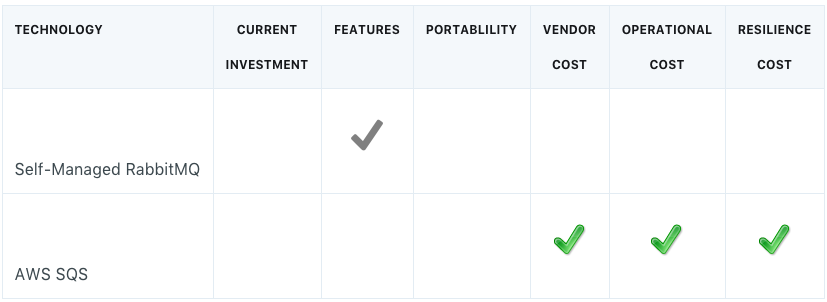

Message Broker

Here's another plumbing element that takes quite a bit of effort to make resilient to failure, highly available and scalable. AWS offers a proprietary service called Simple Queue Service (SQS), and its feature set is good enough. It's also wicked cheap: the first one million messages per month are free and there are no data transfer fees to or from EC2 instances.

I set Portability as a draw. It was a simple extension to an internal message library to add SQS support. If need be, RabbitMQ could be set up in another cloud, and clients are simply started up with a different environment variable.
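The shape of that abstraction can be sketched as follows. This is not the internal library itself: the `MESSAGE_BROKER` variable name, the `BACKENDS` registry and the `InMemoryQueue` stand-in are all hypothetical, and a real version would wrap the SQS and RabbitMQ clients behind the same two methods.

```python
import os
from collections import deque


class InMemoryQueue:
    """Stand-in backend so the sketch runs without any broker."""

    def __init__(self, label):
        self.label = label
        self._messages = deque()

    def send(self, body):
        self._messages.append(body)

    def receive(self):
        """Return the oldest message, or None when the queue is empty."""
        return self._messages.popleft() if self._messages else None


# Hypothetical registry: in a real library each entry would wrap the
# SQS or RabbitMQ client behind the same send/receive methods.
BACKENDS = {
    "sqs": lambda: InMemoryQueue("sqs"),
    "rabbitmq": lambda: InMemoryQueue("rabbitmq"),
}


def make_queue(env=os.environ):
    """Pick the backend from MESSAGE_BROKER (an assumed variable name)."""
    name = env.get("MESSAGE_BROKER", "sqs").lower()
    try:
        return BACKENDS[name]()
    except KeyError:
        raise ValueError(f"unsupported broker: {name}") from None


# Clients never name a broker; only the environment does.
queue = make_queue({"MESSAGE_BROKER": "rabbitmq"})
queue.send("hello")
print(queue.label, queue.receive())
```

Keeping the interface down to what both brokers can do is what makes the swap cheap. Leaning on broker-specific features would erode the portability.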

Now let's look at three cases that aren't so easy to call.

NoSQL Database

If I had a case for a non-relational or a multi-master store, I would think long and hard about whether I would marry AWS' DynamoDB. Sure, it's inexpensive, resilient and scalable, but it's a proprietary data model and interface, even more so once you get into query tuning and index optimization.

On the other hand, are you ready to invest in running your own MongoDB? If you're doing it for multi-master, then I suspect you're going to be investing significant effort. If you're Netflix, you can afford to run your own Cassandra. For contrast, Intercom, the 'Run Less Software' guys, went for DynamoDB.

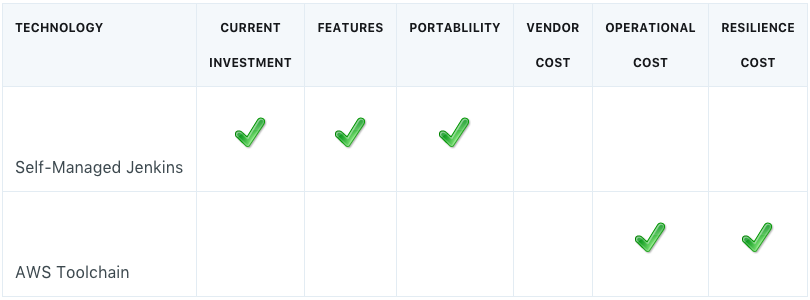

Build Server

AWS has a family of tools in this arena: CodeCommit, CodeBuild, CodeDeploy and CodePipeline. But WebomateS had a significant comfort level with Bitbucket and Jenkins. The light lifting was in building EC2/ECS deploy scripts.

Cost was a draw. Since the team didn't need scale or HA for the foreseeable future (a single decent EC2 host is enough), resilience didn't sway.

Monitoring

For familiarity, capability and cost, WebomateS went with Nagios over CloudWatch. Nagios provides deep OS metrics and has no per-metric charge.

Summary

Decision matrices are great tools for adding rigor to technology selection and architectural evaluation. The six dimensions presented here are core to building such a matrix. These decisions on using cloud-managed services are really a small variation on the classic buy vs build tradeoff evaluation. I think you'll find that in most cases it makes more sense to offload as much as possible to the cloud provider. This frees you to focus on delivering value to your customers.

Published Jan 23, 2018

Revised Jan 24, 2018

Cloud icon made by Jojo Mendoza, CC 3.0 BY license

Comments? email me - mark at sawers dot com